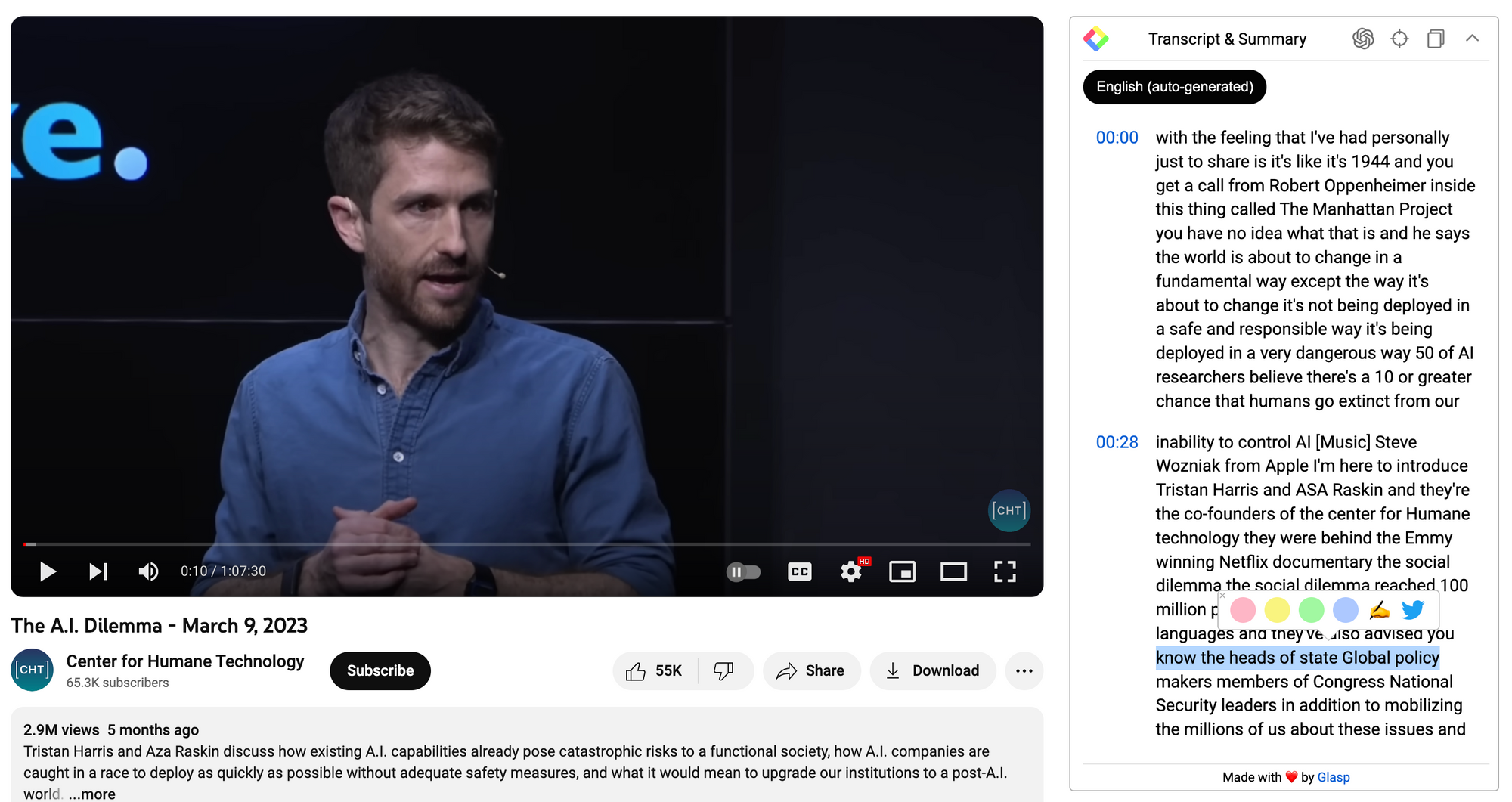

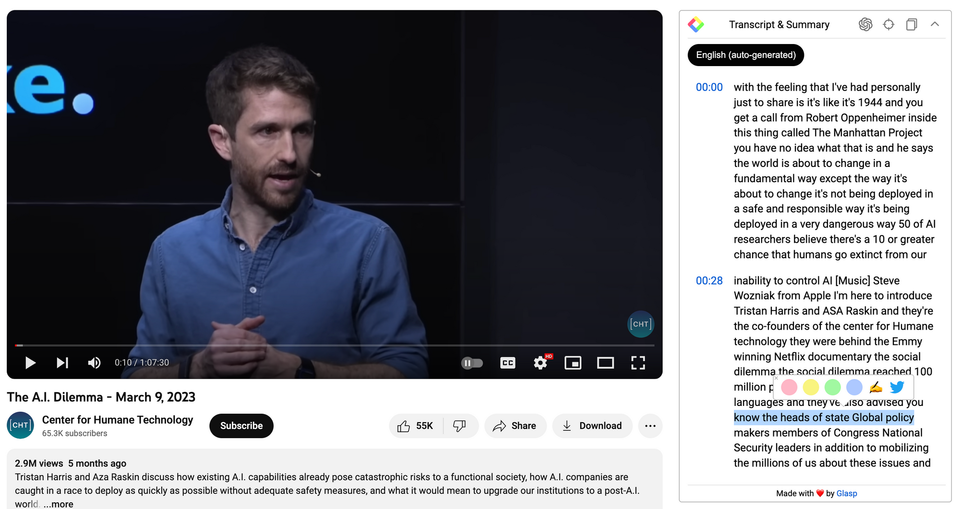

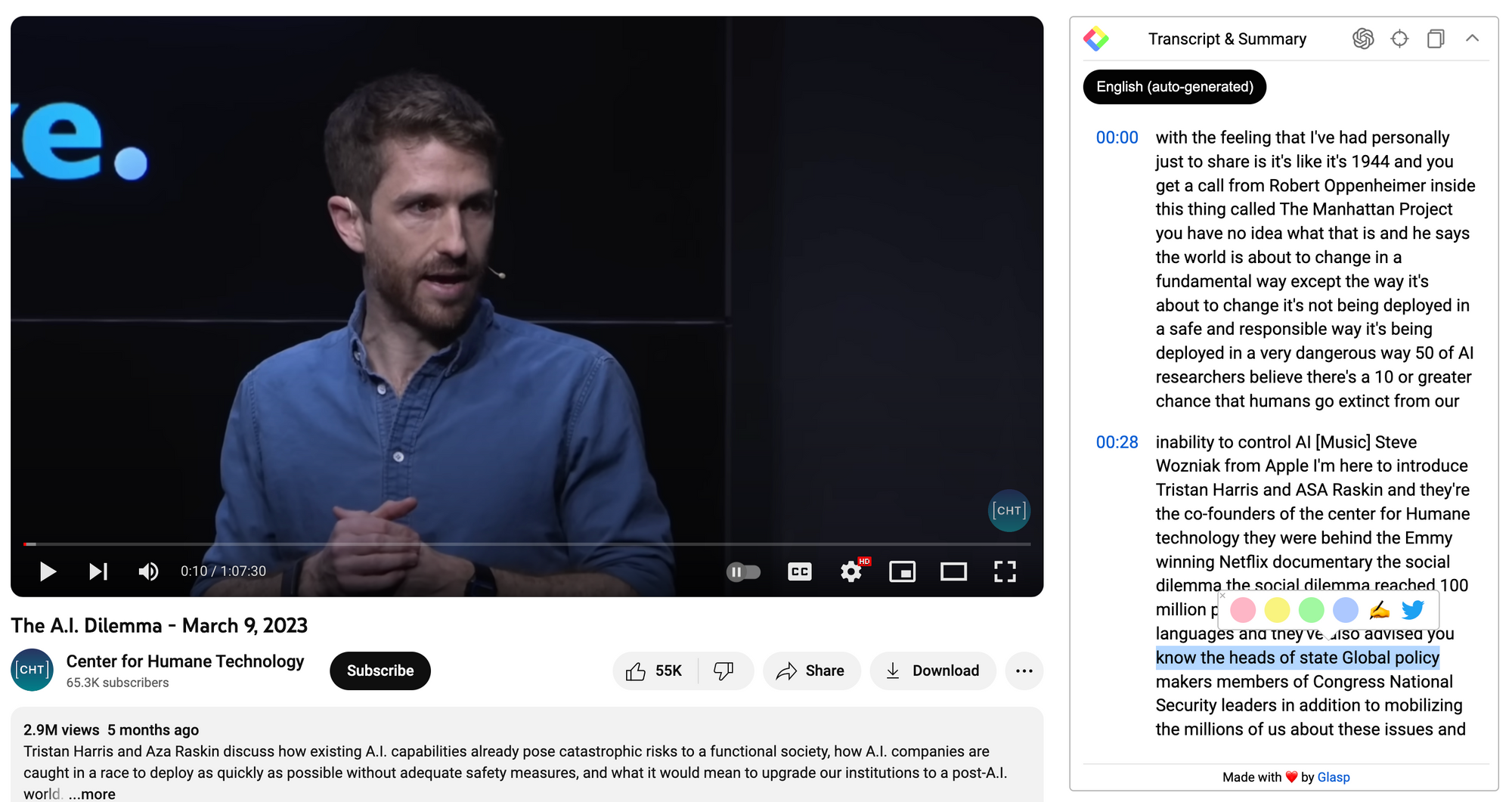

Tristan Harris and Aza Raskin - The A.I. Dilemma: Summary and Q&A

Introduction

In an era dominated by rapid technological advancements, this video delves deep into the transformative yet potentially hazardous world of Artificial Intelligence. Drawing parallels with pivotal moments in history, it underscores the urgency of understanding and responsibly navigating AI's exponential growth. Join us as we unravel the implications of our generation's most influential tool.

Summary

- The rapid advancement and deployment of AI technology can be likened to the momentous impact of the Manhattan Project, with concerns about its safe and responsible use.

- Tristan Harris and Aza Raskin, co-founders of the Center for Humane Technology and creators of the documentary "The Social Dilemma," discuss the unpredictable and transformational nature of AI.

- Many AI researchers worry that humans could lose control over advanced AI systems, which the speakers call "Golem class AIs," with unforeseen consequences.

- AI's rapid evolution is outpacing human understanding; some AI models now possess emergent capabilities beyond their original programming, and there are challenges in ensuring their safety and alignment with human values.

- There's an urgent need for global collaboration and coordination in regulating AI's deployment and evolution, similar to the way nuclear weapons were globally managed.

Q&A

Q: What was the key comparison made by the speaker to illustrate the dangers of AI?

A: The speaker likened the current situation with AI to getting a call from Robert Oppenheimer in 1944 about the Manhattan Project, suggesting that just as the world changed fundamentally with the development of nuclear weapons, it is about to change again due to AI. The difference is that AI is not currently being developed and deployed in a safe and responsible manner, which makes it potentially more hazardous.

Q: Who are Tristan Harris and Aza Raskin and why are they significant in this context?

A: Tristan Harris and Aza Raskin are the co-founders of the Center for Humane Technology. They were influential in producing the Emmy-winning Netflix documentary "The Social Dilemma," which reached 100 million people worldwide. Their expertise and advocacy have led them to advise heads of state, global policymakers, members of Congress, and national security leaders about the implications and dangers of technology, especially AI.

Q: How did the speaker illustrate the potential dangers of unchecked AI deployment?

A: The speaker posed a hypothetical situation: if a plane had a 10% chance of crashing, would you board it? The parallel drawn here is that we are rapidly onboarding people onto this metaphorical "plane" (AI) without fully understanding or addressing the associated risks.

Q: What is the main issue with the way new technologies, especially AI, are being developed and used?

A: Whenever a new technology is invented, it brings about a new class of responsibility that isn't always immediately obvious. For instance, YouTube's recommendation system, while improving personalized suggestions, unintentionally led users down "rabbit holes," resulting in the spread of misinformation and extremist views. The key point is that technological advancements can have unintended and sometimes harmful consequences.

Q: What are "Golem class AIs"?

A: Drawing from folklore, "Golem" refers to inanimate objects that suddenly gain unexpected capabilities. In this context, "Golem class AIs" denote AI models that exhibit emergent behaviors or abilities that weren't explicitly programmed into them. These capabilities can be both awe-inspiring and alarming, as we don't always fully comprehend their scope or potential impact.

Q: What breakthroughs in AI technology related to audio and imaging were highlighted?

A: The speaker highlighted that with just three seconds of audio of a person's voice, it's now possible to synthesize the rest. Also, using just Wi-Fi radio signals, AI can identify the positions and number of people in a room, even through walls and in complete darkness. These advancements raise privacy and security concerns.

Q: How is the rapid advancement of AI in testing visualized?

A: The speaker pointed out that initially, it took AI around 20 years to match human abilities in specific tasks. But by 2020, AI was outpacing humans, solving these tasks much faster. The rate of AI's progress is not linear but exponential, making it challenging to predict its future capabilities.

Q: Why is there a push for the "frantic" deployment of AI models like ChatGPT?

A: Companies are in a for-profit race, pushing new AI models into the world to gain market dominance. Deployment is so rapid that the potential dangers are not fully assessed beforehand. The speaker likened this to the reckless rollout of social media, which created societal problems that we are still grappling with.

Q: How did the speaker emphasize the importance of international cooperation in regulating AI?

A: The speaker compared the AI situation to nuclear proliferation, suggesting the need for a collective, global approach to its regulation. Just as global institutions were established to prevent nuclear war and promote peaceful coexistence, a similar multi-nation effort is crucial to ensure that AI's deployment benefits humanity without endangering it.

Q: What's the primary intention behind this presentation?

A: The speaker aimed to initiate a conversation about the potential pitfalls of AI, emphasizing the urgency of understanding and regulating its exponential growth. They hoped to create a shared understanding of the issues, drawing from past lessons with social media, to ensure that society doesn't repeat the same mistakes with AI.
